The most powerful AI models outperform humans on math challenges!

Published on July 1, 2024

Introduction to MathVista


MathVista is a benchmark designed to evaluate how well AI models reason mathematically in visual contexts. It was developed by researchers from the University of California, Los Angeles (UCLA), the University of Washington, and Microsoft Research. MathVista fills a gap in existing evaluations: it measures whether AI systems can not only solve but also understand and interpret math problems that depend on visual elements such as figures, charts, and diagrams. Its broader goal is to push AI research forward by giving the field a demanding, consistent test of visual and mathematical reasoning.

How many categories of tasks has AI been tested on?

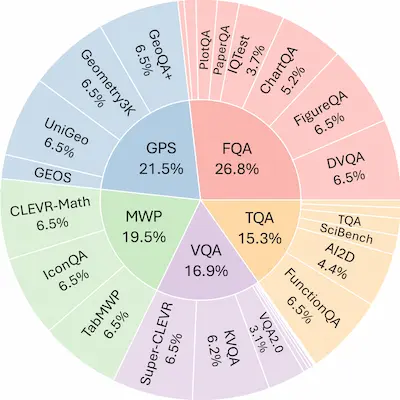

According to the MathVista website, the benchmark evaluates AI models along two dimensions: 5 main task types and 7 mathematical reasoning types. Together, these categories are designed to test how accurately models handle a wide variety of mathematical problems, and the breakdown shows where a model is strong or weak rather than reporting a single overall score.

5 Main Task Types:

Below are the 5 main task types evaluated by the MathVista benchmark:

- FQA: Figure Question Answering - AI models answer questions based on figures or diagrams.

- GPS: Geometry Problem Solving - AI models solve geometric problems.

- MWP: Math Word Problem - AI models tackle word problems involving mathematical concepts.

- TQA: Textbook Question Answering - AI models answer questions derived from textbook content.

- VQA: Visual Question Answering - AI models respond to questions based on visual content.

7 Mathematical Reasoning Types:

These 7 mathematical reasoning types provide a comprehensive framework to evaluate different aspects of AI's problem-solving capabilities:

- ALG: Algebraic - Involves solving equations and understanding algebraic expressions.

- ARI: Arithmetic - Focuses on basic arithmetic operations like addition, subtraction, multiplication, and division.

- GEO: Geometry - Deals with geometric shapes, properties, and theorems.

- LOG: Logical - Involves logical reasoning and problem-solving skills.

- NUM: Numeric Common Sense - Involves everyday knowledge about numbers and quantities.

- SCI: Scientific - Incorporates mathematical concepts used in scientific contexts.

- STA: Statistical - Relates to data analysis, probability, and statistical reasoning.
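Since results are broken down by these categories, it helps to see how a per-category score could be computed. The Python sketch below assumes each problem is tagged with one task type and one or more reasoning types, and uses a hypothetical record format (`task`, `skills`, `correct`) with toy data. It is not MathVista's official evaluation code, and the real dataset fields may differ.

```python
from collections import defaultdict

# Category codes as listed above.
TASK_TYPES = {"FQA", "GPS", "MWP", "TQA", "VQA"}
REASONING_TYPES = {"ALG", "ARI", "GEO", "LOG", "NUM", "SCI", "STA"}


def accuracy_by_category(results):
    """Return {category code: accuracy in percent}.

    Each record is a hypothetical dict with keys "task" (one task code),
    "skills" (a list of reasoning codes) and "correct" (bool).
    """
    totals = defaultdict(int)  # problems seen per category
    hits = defaultdict(int)    # problems answered correctly per category
    for record in results:
        if record["task"] not in TASK_TYPES:
            raise ValueError(f"unknown task type: {record['task']}")
        categories = [record["task"]]
        for skill in record["skills"]:
            if skill not in REASONING_TYPES:
                raise ValueError(f"unknown reasoning type: {skill}")
            categories.append(skill)
        # A problem counts once toward its task and once toward each skill.
        for code in categories:
            totals[code] += 1
            hits[code] += int(record["correct"])
    return {code: round(100 * hits[code] / totals[code], 1) for code in totals}


# Hypothetical toy results, not real MathVista data.
results = [
    {"task": "GPS", "skills": ["GEO", "ALG"], "correct": True},
    {"task": "GPS", "skills": ["GEO"], "correct": False},
    {"task": "FQA", "skills": ["STA"], "correct": True},
]
print(accuracy_by_category(results))
```

Because a single problem can count toward several reasoning types, the per-skill totals can add up to more than the number of problems. The task-type totals, by contrast, always sum to the problem count.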

Latest AI Scores

| Model | Score (accuracy, %) | Date |
|---|---|---|
| Human Performance* | 60.3 | 2023-10-03 |
| Gemini 1.5 Pro (May 2024) | 63.9 | 2024-05-17 |
| GPT-4o | 63.8 | 2024-05-13 |
| InternVL-Chat-V1.2-Plus | 59.9 | 2024-02-22 |
| Gemini 1.5 Flash (May 2024) | 58.4 | 2024-05-17 |

💡 Human Performance*: average performance of Amazon Mechanical Turk (AMT) annotators with at least a high school diploma.

The table shows that Gemini 1.5 Pro (63.9) and GPT-4o (63.8) have surpassed the average human score of 60.3 on MathVista.
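To make the comparison with the human baseline concrete, the Python sketch below computes each model's margin in percentage points using the scores from the table. The names `scores`, `HUMAN_BASELINE`, and `margin_over_human` are illustrative only and are not part of any MathVista tooling.

```python
# Scores copied from the table (MathVista accuracy, %).
HUMAN_BASELINE = 60.3  # the "Human Performance*" row

scores = {
    "Gemini 1.5 Pro (May 2024)": 63.9,
    "GPT-4o": 63.8,
    "InternVL-Chat-V1.2-Plus": 59.9,
    "Gemini 1.5 Flash (May 2024)": 58.4,
}


def margin_over_human(score, baseline=HUMAN_BASELINE):
    """Percentage-point difference from the human baseline, rounded to 0.1."""
    return round(score - baseline, 1)


# Print models from highest to lowest score with their margin.
for model, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    gap = margin_over_human(score)
    if gap > 0:
        verdict = "above"
    elif gap < 0:
        verdict = "below"
    else:
        verdict = "equal to"
    print(f"{model}: {score} ({gap:+.1f} pts, {verdict} the human average)")
```

Rounding the difference guards against floating-point noise (for example, 63.9 - 60.3 is not exactly 3.6 in binary floating point). The two leading models clear the human average by 3.6 and 3.5 points. The other two listed models fall just short of it.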

Implications of These Results

The scores achieved by Gemini 1.5 Pro and GPT-4o indicate that leading AI models now exceed average human performance on MathVista's visual math problems. This milestone marks significant progress in AI's ability to handle complex mathematical challenges that combine visual and textual information.
